Authenticity in Business

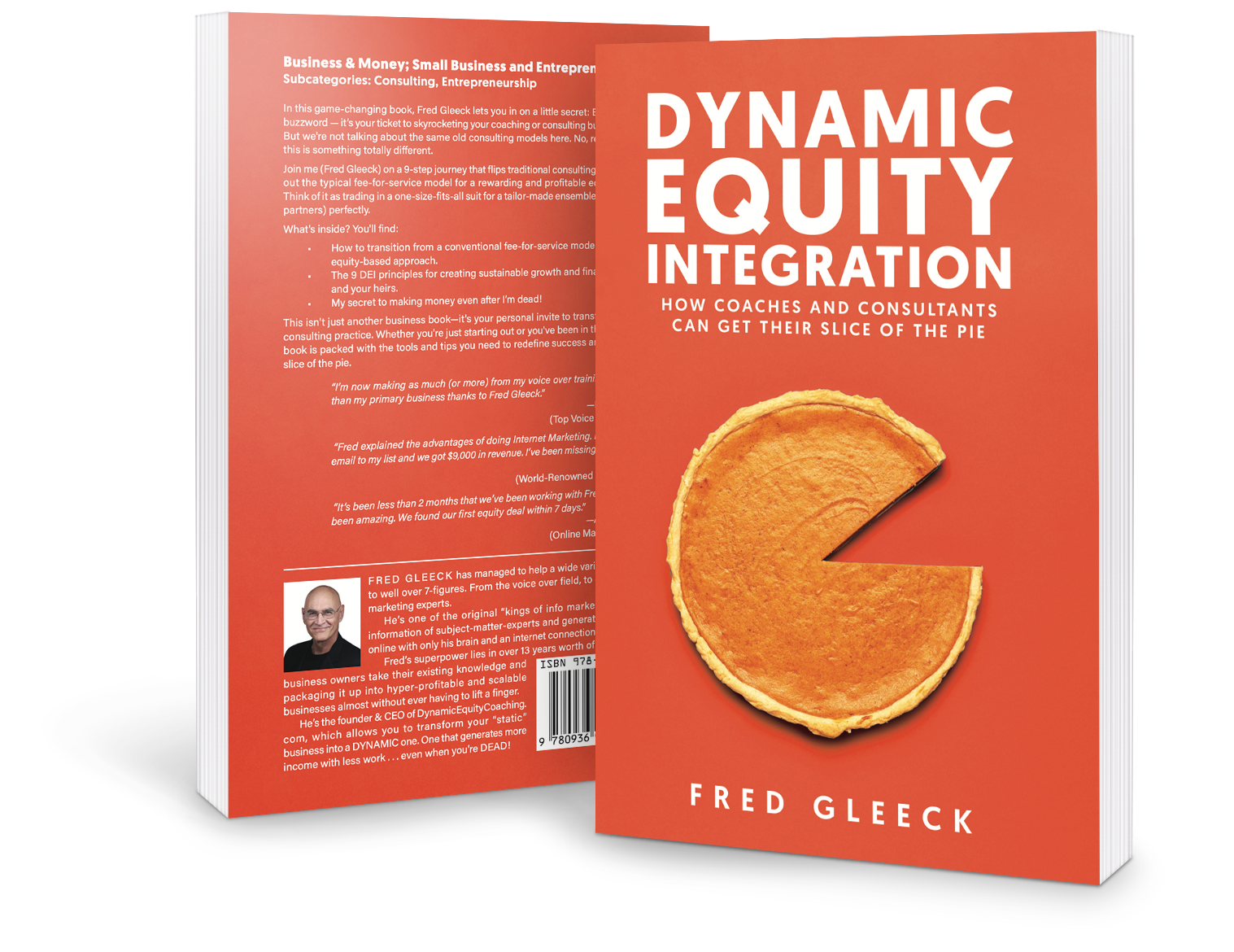

Dynamic Equity Integration | Authenticity in Business

Business

January 12, 2024

DOWNLOAD NOW!

I'd like to download this NEW BOOK right now, and discover how to start getting equity. $19.00 - Now Only $9.95!

Copyright 2024 FredGleeck.com

This site is not a part of the YouTube, Google, or Facebook websites, nor of Google Inc. or Facebook Inc.

Additionally, this site is NOT endorsed by YouTube, Google, or Facebook in any way.

FACEBOOK is a trademark of FACEBOOK, Inc. YOUTUBE is a trademark of GOOGLE Inc.

* Income Disclaimer: The information provided on this website is for educational and informational purposes only. Any income or financial results mentioned on this website are not guaranteed. Individual results may vary and are based on various factors such as effort, skills, experience, and market conditions. The income figures and examples used on this website are not intended to represent or guarantee that anyone will achieve the same results. We cannot and do not make any guarantees regarding the level of success or income that you may experience. Your success depends on your own efforts, dedication, and commitment to applying the information provided. Any testimonials, case studies, or success stories shared on this website are exceptional results and are not necessarily representative of the average person's experience. We cannot guarantee that you will achieve similar results. It is important to note that earning potential and income outcomes can vary significantly from person to person. The information provided on this website is not financial or legal advice. Before making any financial decisions, it is recommended that you consult with a qualified professional. By using this website, you agree to release and hold harmless the website owner, its affiliates, employees, and partners from any and all liability arising from your use of the information provided. Please use your own discretion and judgement when making financial decisions based on the information provided on this website.